In this week’s Roundup: the US military’s fake Facebook accounts, how social media managers are coping with the problems at Twitter, and a(nother) problematic AI system from Meta.

Before Elon Musk’s purchase of Twitter became dire reality, we noted that any changes to content moderation policies at the company would rub up against increasingly stringent EU laws, most notably the proposed Digital Services Act.

Now it seems that the office best placed to ensure compliance with these rules has been shuttered, with news from The Guardian’s Jennifer Rankin that the two most senior staff members involved with digital policy are no longer at the company.

The duo survived the first round of layoffs, which saw 50% of Twitter’s workforce depart, so it’s unclear whether they were subsequently let go or chose to leave. Either way, with news that previously banned accounts will be granted an “amnesty,” Musk’s moves on content moderation will be closely watched.

Is this good or bad PR? According to Sean Lyngaas at CNN, Meta has purportedly closed fake accounts tied to the US military that “promoted US interests” in Afghanistan (good luck with that one) and Central Asia.

On the one hand, it seems like a win that these fake accounts have been detected and removed; on the other, it’s yet another sign of the problems that Meta has had with disinformation.

Analysis

When Elon Musk first went into damage limitation mode and attempted to reassure advertisers that Twitter would not become a cesspit of hate speech, the calculation was simple. In essence, the large brands that advertise on Twitter and generate the vast majority of its revenue don’t want to advertise their new diaper cream formulation next to someone’s carefully elaborated conspiracy theory.

So how are social media managers dealing with the uncertainty around Twitter? No one wants to promote their brand on a platform that, as one person has it in this article from Digiday’s Cloey Callahan, feels “‘apocalyptic’ and ‘doomsday-esque.’”

Those businesses with a presence across different social media platforms will be better insulated from the carnage at Twitter, but it still presents a major difficulty. Do you engage with the elephant in the room or let it sit there not so quietly? Here’s what social media managers have to say.

So the exodus has begun. Like a flock of birds migrating for the winter, these tweeters are heading for sunnier climes.

Where are they going? Laurel Wamsley at NPR has this guide to the alternatives.

AI

I’m about to shock you here. Ready? Meta has released an AI system that, given harmful inputs, has no problem producing even more terrible outputs – to wit: a research paper on the benefits of eating crushed glass, and a Wiki article on the benefits of suicide.

Meta’s Galactica AI (“a large language model that can store, combine and reason about scientific knowledge”) was released to the public in demo form and, unsurprisingly, people wanted to put it to the test. On the assumption that not everyone will want to use these things for wholly wholesome reasons, Tristan Greene at The Next Web tested the AI to see what it could come up with – with predictable results.

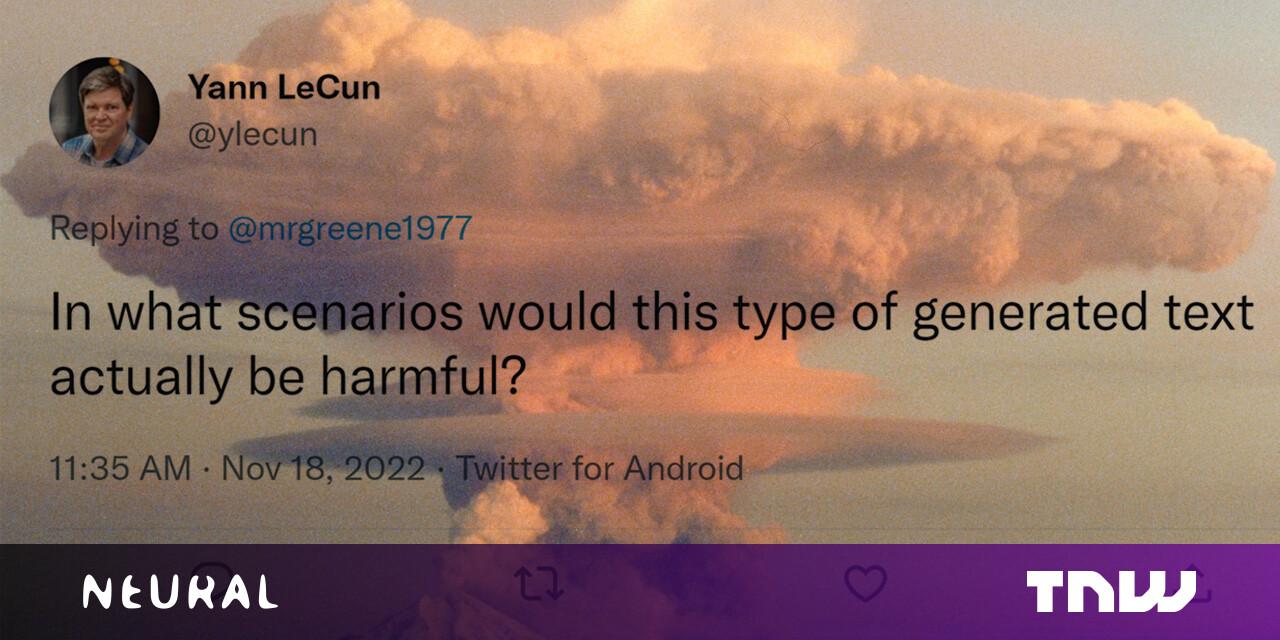

Defending his creation, Meta’s chief AI scientist, Yann LeCun, took umbrage at the suggestion that this AI was anything other than brilliant, asking without the faintest trace of irony, “In what scenarios would this type of generated text actually be harmful?”

Having already conquered Go and chess, AI is now besting humans at Diplomacy, Risk’s sexy cousin. For the uninitiated, the game is pretty much what it sounds like – players try to take control of Europe through the techniques of diplomacy: negotiation, forging tactical alliances, breaking tactical alliances, etc.

Apparently this has numerous applications, says MIT Tech Review’s Will Douglas Heaven, in spheres such as traffic planning where compromise is important.